Adobe Summit 2018 Highlights

I’ve been to a few Adobe Summits before, and even though it may sound like a cliché, this was by far the best one I have attended.

I don’t know if Adobe really made some strides over the last year or if I just went to all of the right sessions, but every session gave me a specific action item to take back and use in my everyday routine.

It’s great to be wowed with cool visual effects, funny presentations, and utopian views of where the industry is going as a whole, but if I don’t come back with some clear action items for how to get there, it doesn’t really do me a whole lot of good. This year Adobe really delivered, and I got clearer direction than in previous years.

During the keynotes, Adobe always presents the advancements they are making throughout their Experience Cloud and all of the cool things that can be done in the future. This year, though, they also announced resources to train more users on how to actually do those cool things, in what is called the “Experience League.”

It is described as, “…a new enablement program with guided learning to help you get the most out of Adobe Experience Cloud. With training materials, one-to-one expert support, and a thriving community of fellow professionals, Experience League is a comprehensive program designed to help you become your best.”

Adobe has been making a concerted effort over the past few years to improve their documentation and enable more people to use their tools to their full extent. This appears to be a big step in that process.

Beyond the Experience League, here are the rest of my Adobe Summit 2018 highlights as seen through the eyes of an analyst.

Biggest Feature to come to Analytics in the Near Future

If you want any analysis beyond first- or last-touch attribution from Adobe, historically you’ve been out of luck, unless, of course, you want to export the data and do the analysis yourself. Over the years I have seen other analytics tools introduce multi-touch attribution and subsequently release further updates to these features. Meanwhile, Adobe Analytics’ Marketing Channels reports look like they’re still stuck in 1997.

I had a glimmer of hope a few years ago when a tool called “X Attribution” was demoed at the Adobe Sneaks session, but with each passing Analysis Workspace update, my hope continued to fade.

Imagine my excitement when, during Monday’s keynote, Adobe Analytics Evangelist and avid Philadelphia Eagles fan Eric Matisoff made his way to center stage and previewed a new panel in Analysis Workspace called “Attribution IQ.”

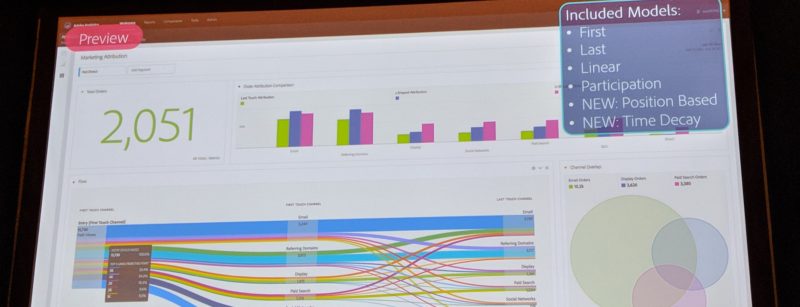

In the demonstration, it appeared to work very similarly to “Segment IQ”: you drag a few dimensions (and, in this case, a metric) onto a panel, click a button, and voila! Analysis!

But this one has a few more options. For example, it appears that you can select between at least the following attribution models:

- First Touch

- Last Touch

- Linear

- Participation

- J Shaped

- U Shaped

- Inverse J

- Time Decay

While there is no algorithmic option just yet, this is a massive step forward from just relying on first and last touch and ignoring the steps in between. This new tool will allow users to compare and contrast how a number of very commonly used attribution models tell a different story about their data and their customers.
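
To make the differences between these models concrete, here is a minimal Python sketch of how each rule might split the credit for one conversion across an ordered list of touch points. The weights are assumptions based on commonly cited definitions (40/20/40 for U-shaped, 20/20/60 for J-shaped and the reverse for Inverse J, a 7-day half-life for time decay), not Adobe’s documented implementation, and the `attribute` function and model keys are hypothetical names for illustration.

```python
from collections import defaultdict

# Assumed (first, last) shares for the position-based models; the remainder
# is split evenly across the middle touches.
POSITION_WEIGHTS = {
    "u_shaped": (0.4, 0.4),
    "j_shaped": (0.2, 0.6),
    "inverse_j": (0.6, 0.2),
}


def attribute(touches, model, half_life_days=7.0):
    """Split one conversion's credit across ordered touch points.

    touches: list of (channel, days_before_conversion), oldest first.
    Returns {channel: credit}.
    """
    n = len(touches)
    if n == 0:
        return {}
    if model == "first_touch":
        weights = [1.0] + [0.0] * (n - 1)
    elif model == "last_touch":
        weights = [0.0] * (n - 1) + [1.0]
    elif model == "linear":
        weights = [1.0 / n] * n
    elif model == "participation":
        # Every touch gets full credit, so totals can exceed one conversion.
        weights = [1.0] * n
    elif model in POSITION_WEIGHTS:
        first, last = POSITION_WEIGHTS[model]
        if n == 1:
            weights = [1.0]
        elif n == 2:
            # No middle touches: rescale first/last so credit sums to 1
            # (a simplifying choice made for this sketch).
            weights = [first / (first + last), last / (first + last)]
        else:
            middle = (1.0 - first - last) / (n - 2)
            weights = [first] + [middle] * (n - 2) + [last]
    elif model == "time_decay":
        # Credit halves for every `half_life_days` between touch and conversion.
        raw = [0.5 ** (days / half_life_days) for _, days in touches]
        total = sum(raw)
        weights = [r / total for r in raw]
    else:
        raise ValueError(f"unknown model: {model}")

    credit = defaultdict(float)
    for (channel, _), weight in zip(touches, weights):
        credit[channel] += weight
    return dict(credit)


# Hypothetical path: (channel, days before the conversion).
path = [("Paid Search", 10), ("Email", 3), ("Display", 1), ("Direct", 0)]
for model in ("first_touch", "last_touch", "linear", "u_shaped", "j_shaped", "time_decay"):
    print(model, attribute(path, model))
```

Running one path through every model side by side is the kind of comparison Attribution IQ appears to make point-and-click.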

Another important thing to call out is that this new feature allows for some very exciting customization.

For example, it appears you can change your success metric at any time to run the same sort of analysis for success events such as newsletter sign-ups, lead gen form completions, donations, or whatever KPI matters most for your website. You can also change the look-back window based on the sales cycle of your customers.

Adobe Data Workbench’s Best Fit Attribution feature gives you the ability to change your touch metrics as well as your success metrics. Even though it wasn’t explicitly stated in the demo, I’d be interested to see whether we can do the same thing in the upcoming Attribution IQ feature of Analysis Workspace and change the touch points (marketing channels, in the example he gave) to something else.

For example, the touch points could be article views, whitepaper downloads, or internal search terms. If this tool can be used for any eVar along with any success event, it will be a game changer. It hasn’t come out yet, so I can’t say whether or not this is the case. I’m very curious to test it out in the near future.

Honorable Mentions

One thing that was briefly mentioned was something called “Streaming Audio Analytics.” This has been lacking in the past, and in order to track things like audiobooks, music, or podcasts, we have had to hack together solutions. It sounds like there will be a standardized solution now, which is promising.

Another thing I am very excited to play around with is the ability to change the definition of a session on the fly in virtual report suites. A session is not always as cut-and-dried as “30 minutes of inactivity equals a reset.” For situations such as a kiosk or a shared device, there may be clearly defined actions that denote the session needs to be reset even though there has been no extended period of inactivity. Now we can identify events that are meant to reset a session.

The best part is that all of this happens through virtual report suites, so it is a completely non-destructive way of viewing your data.
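
The session-reset idea can be sketched in a few lines of Python: a new session starts after 30 minutes of inactivity, or as soon as a hit carries an event flagged as a reset. This is only an illustrative model of the logic, not how virtual report suites work internally; it assumes one visitor’s hits arrive as time-sorted (timestamp in seconds, event name) pairs, and the `kiosk_reset` event name is hypothetical.

```python
SESSION_TIMEOUT_SECONDS = 30 * 60


def assign_sessions(hits, reset_events=frozenset(), timeout=SESSION_TIMEOUT_SECONDS):
    """Return a session number for each hit of a single visitor.

    hits: list of (timestamp_seconds, event_name), sorted by time.
    A new session starts when the gap since the previous hit exceeds
    `timeout`, or when the hit's event is in `reset_events`.
    """
    session_ids = []
    session = 0
    previous_ts = None
    for ts, event in hits:
        if previous_ts is not None and (ts - previous_ts > timeout or event in reset_events):
            session += 1
        session_ids.append(session)
        previous_ts = ts
    return session_ids


hits = [(0, "page_view"), (120, "page_view"), (200, "kiosk_reset"),
        (260, "page_view"), (4000, "page_view")]
print(assign_sessions(hits))                   # [0, 0, 0, 0, 1]
print(assign_sessions(hits, {"kiosk_reset"}))  # [0, 0, 1, 1, 2]
```

With only the inactivity rule, the kiosk hits all land in one session; adding the reset event splits them at the moment the next person starts using the device.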

Favorite Summit Breakout Session

I told anyone within earshot all week that, hands down, the best session of the entire Adobe Summit was “Advanced Analysis with Adobe Analytics and R” with Trevor Paulsen and Tim Wilson. Trevor has a blog that I regularly geek out over called The Data Feed Toolbox, where he goes over some fantastic use cases with Adobe’s Data Feeds and R. In this session, Tim started out by showing how to perform some light text mining to make an actually usable word cloud from your internal search terms.
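
Tim worked in R and his code isn’t reproduced here, but the basic idea behind a more usable word cloud (split search phrases into words, normalize case, drop stop words, count) can be sketched in Python. The stop-word list and the sample search terms below are made up for illustration.

```python
import re
from collections import Counter

# A tiny, illustrative stop-word list; a real project would use a fuller one.
STOP_WORDS = {"a", "an", "and", "the", "for", "of", "to", "in", "on", "how", "is"}


def term_frequencies(search_terms):
    """Count individual words across internal search phrases."""
    counts = Counter()
    for phrase in search_terms:
        words = re.findall(r"[a-z0-9']+", phrase.lower())
        counts.update(w for w in words if w not in STOP_WORDS)
    return counts


# Hypothetical internal search terms.
terms = ["Return Policy", "return policy for shoes", "shoes size chart",
         "how to return shoes", "Size chart"]
print(term_frequencies(terms).most_common(3))
```

Counting words rather than whole phrases is what keeps “Return Policy” and “return policy for shoes” from showing up as unrelated entries in the cloud.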

If that wasn’t enough, Trevor then went into two extremely useful examples of how to use R to perform some very advanced analysis with your Data Feeds.

I already gushed about how great it is that we are going to have some basic attribution modeling capabilities in Analysis Workspace, so I won’t say much more about Trevor’s algorithmic attribution example. I will speak briefly about his other example, though: building a customer propensity model.

A propensity model is a tool that takes into account a huge amount of data and organizes your customers based on their likelihood of converting. People with a high propensity score are more likely to convert and those with a lower score are less likely to convert.

This is obviously an extremely valuable and efficient way of organizing your customers. Imagine if you could only market to users that have a high propensity score vs throwing your money away on users that have next to 0% chance of ever converting.
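
Trevor built his model in R, and this post doesn’t record which algorithm he used, so treat the following as a swapped-in illustration: a plain logistic regression, trained with batch gradient descent in pure Python, that turns a few per-visitor features into a propensity score between 0 and 1. The feature names, the tiny training set, and the visitor IDs are all hypothetical; a real model would use far more data and a proper statistics library.

```python
import math


def sigmoid(z):
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def train_logistic(X, y, lr=0.01, epochs=5000):
    """Fit P(convert) = sigmoid(b + w . x) with batch gradient descent."""
    n_features = len(X[0])
    w = [0.0] * n_features
    b = 0.0
    m = len(X)
    for _ in range(epochs):
        grad_w = [0.0] * n_features
        grad_b = 0.0
        for xi, yi in zip(X, y):
            error = sigmoid(b + sum(wj * xj for wj, xj in zip(w, xi))) - yi
            for j in range(n_features):
                grad_w[j] += error * xi[j]
            grad_b += error
        w = [wj - lr * gj / m for wj, gj in zip(w, grad_w)]
        b -= lr * grad_b / m
    return w, b


def propensity(x, w, b):
    """Score one visitor's feature vector."""
    return sigmoid(b + sum(wj * xj for wj, xj in zip(w, x)))


# Hypothetical features per visitor: [visits in last 30 days, product views, cart adds]
X = [[1, 0, 0], [2, 1, 0], [1, 2, 0], [6, 8, 2], [5, 6, 1], [8, 10, 3]]
y = [0, 0, 0, 1, 1, 1]  # 1 = converted
w, b = train_logistic(X, y)

new_visitors = {"visitor_a": [7, 9, 2], "visitor_b": [1, 1, 0]}
scores = {vid: propensity(x, w, b) for vid, x in new_visitors.items()}
print(scores)
```

Logistic regression is just one common choice for this kind of score; the useful output is the ranking of visitors, whichever model produces it.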

So Trevor briefly went through his process, and then he really blew our minds. Once he had assigned a propensity score to all of his users, he was able to upload that data back into Adobe Analytics using the Customer Attributes feature. There are a few steps involved, so I suggest watching his session to get the full lowdown.

This last step of uploading the data to Adobe Analytics completely blew the door open on this whole exercise.

Once this data is in Adobe Analytics and tied directly to each user, the possibilities are endless. You can use the propensity score as a metric, in segments, and with any dimension such as geolocation, browser type, first-touch marketing channel, etc. Then came the kicker! By creating two segments, one for users with a low propensity score and one for users with a high propensity score, you can use them both in Segment IQ to compare every dimension, metric, and segment in your dataset and see which contribute most to having either a high or a low propensity score.
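
Before an upload like the one described above, the scores have to be written out keyed by customer ID. Here is a minimal sketch that also pre-buckets each score into a “high” or “low” label, one way to prepare for the two-segment comparison (you could equally build both segments in Workspace from the numeric score). The column names and the 0.5 threshold are hypothetical, and the exact file format Customer Attributes expects should be checked against Adobe’s documentation.

```python
import csv
import io


def propensity_segment(score, threshold=0.5):
    """Label a score as 'high' or 'low' propensity (threshold is an assumption)."""
    return "high" if score >= threshold else "low"


def attributes_csv(scores, threshold=0.5):
    """Build CSV text keyed by customer ID with the score and its bucket."""
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    # Hypothetical column names.
    writer.writerow(["customer_id", "propensity_score", "propensity_segment"])
    for customer_id, score in sorted(scores.items()):
        writer.writerow([customer_id, f"{score:.3f}", propensity_segment(score, threshold)])
    return buffer.getvalue()


print(attributes_csv({"cust_002": 0.12, "cust_001": 0.87}))
```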

The best part about Trevor’s sessions is that he always makes all of his code available at the end. Next year, I strongly suggest giving his session a go.

Best “Sneaked” Feature

Every year my favorite part of the Summit is the “Sneaks” session, where Adobe showcases features that are either actively being worked on and will make their way to production soon, or are still in the idea phase. In my opinion, this year’s Sneaks really stepped it up in terms of intriguing new features, more so than in recent years.

The sneak that wins in this category for me was called Experience Analytics. While it might not be the flashiest feature, it’s something that will help a lot of people get out of Excel (which is my life’s goal). It also looks like it is very close to being available.

The whole concept of Experience Analytics is that you can upload any data set to Analysis Workspace, not just digital analytics data, and use it as your visualization tool of choice. From a usability standpoint, Analysis Workspace is one of the best tools out there for exploratory analysis.

There are other tools like Data Studio that have their strengths, but they aren’t quite as simple as the drag and drop interface of Analysis Workspace.

I can’t count the number of times I’ve been asked to recreate some massive Excel document with Adobe Report Builder. After I try to convince the client that I could do it much faster in Analysis Workspace, I am told it has to be in Excel because data sources other than Adobe Analytics are involved. If I could take these other data sources, blend them together, and use Analysis Workspace as the visualization tool, much like I would Tableau, Google Data Studio, or Power BI, it would save me insane amounts of time on these sorts of projects.

In this sneak, Trevor (yup, here’s Trevor again!) demonstrated how you could not only upload data sets to Adobe, but also join data sets for even more powerful insights.

A few days before March Madness started, Adobe had this initiative called “Hack the Bracket” where they uploaded a huge amount of data about each NCAA basketball team in the tournament to allow you to run your own sort of analysis when filling out your bracket.

I about lost my mind when I saw I could play around with sports data in Analysis Workspace (my two loves). I remember thinking to myself how useful this would be if I could do this with ANY data source. From what I can tell Experience Analytics is exactly how they were able to do this. Here’s hoping we don’t have to wait too long to see this feature come to production.

Honorable Mention

Another one of the best sessions at Summit is called “Too Hot for Main Stage.” At this session, Adobe presents features that are still in the idea phase and lets audience members vote on and debate which ideas they would most like to see Adobe put some real manpower behind. There were two features that I was particularly excited about.

- Smarter and Smarter Attribution – Basically, this one came down to taking everything that is in “Attribution IQ” and adding algorithmic attribution on top of it. I’m not sure whether this feature will make it to Analysis Workspace, and if it does, whether it will be available to all tiers of Adobe Analytics users or only to premium users (just as only premium users have access to Data Workbench). Either way, I’d love to see these more advanced features available in the Workspace interface.

- Forecasting the Future – I think we can all agree that targets in Reports and Analytics were pretty limited and not entirely useful. But at least they existed. In Analysis Workspace there isn’t anything available (natively) that allows you to set targets and compare your current performance to them. With this forecasting ability, you would be able to forecast your KPIs into the future and compare your forecasted performance to your targets to see how far off you are projected to be. More importantly, there would be an “analyze” button that shows the top contributing factors that would lead to you actually reaching your target. Forecasting is a common and simple bit of analysis that currently can only be done outside of Analysis Workspace. This feature would put an end to that.
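
Until something like this ships, a basic version of the forecast-versus-target comparison can be done outside Workspace. Here is a minimal sketch, assuming daily KPI values exported from Adobe Analytics, that fits a straight-line trend with ordinary least squares, projects it to the end of the period, and compares the projected total with a target. A linear trend is a deliberately simple stand-in; the session didn’t say which forecasting method Adobe would use, and the order counts and target below are hypothetical.

```python
def linear_forecast(values, periods_ahead):
    """Fit y = a + b*t by least squares (t = 0..n-1) and project forward."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two observations")
    t_mean = (n - 1) / 2
    y_mean = sum(values) / n
    slope = sum((t - t_mean) * (y - y_mean) for t, y in enumerate(values)) / sum(
        (t - t_mean) ** 2 for t in range(n)
    )
    intercept = y_mean - slope * t_mean
    return [intercept + slope * (n + k) for k in range(periods_ahead)]


def projected_gap(values, periods_ahead, target_total):
    """Projected period total (actuals so far + forecast) minus the target."""
    projected_total = sum(values) + sum(linear_forecast(values, periods_ahead))
    return projected_total - target_total


# Hypothetical: 10 days of orders so far in a 30-day month, target of 3,000 orders.
orders = [80, 84, 83, 88, 90, 91, 95, 94, 98, 100]
gap = projected_gap(orders, periods_ahead=20, target_total=3000)
print(f"Projected gap vs. target: {gap:+.0f} orders")
```

A positive gap means the current trend is on pace to beat the target; a negative gap is roughly how far short you are projected to fall.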

Summit Highlight – Halee Kotara presents at Analytics Rockstar

Last year, I had the opportunity to present at the session-formerly-known-as Adobe Analytics Idol. (Check out last year’s Top Sessions at Adobe Summit 2017 blog post, and if you’re inclined, you can even watch the Idol competition.) This is a competitive session with six contestants each presenting two tips and/or tricks related to Adobe Analytics. At the end of the presentations, the audience votes on which ones they liked the most.

It was a great experience, so I was super excited when my teammate Halee Kotara was selected to present at this year’s session, now renamed Adobe Analytics Rockstar.

As she will be posting an in-depth description of her tips at a later date, I won’t go into the specifics at this time.

Here’s an overview:

- Her first tip addressed the analyst equivalent of flossing: something we all know we need to do, but hate doing. You will have holes in your (data) teeth if you don’t do it, the consequences of not doing a thorough job can be expensive in the end, and, most importantly, if the dentist (a fellow analyst) asks whether you did it and you lie, they’ll always be able to tell. That’s right, I’m talking about QA. I don’t know about you, but any tool or process that helps me QA faster is a welcome sight in my book. Specifically, her tip involves creating an Analysis Workspace project that helps you navigate the treacherous waters of server calls, processing rules, and context data variables whenever you are QA’ing a mobile implementation.

- Halee’s second tip reminded me a lot of the “Forecasting the Future” feature mentioned in the “Too Hot for Main Stage” session. However, instead of relying on a natively built feature in Analysis Workspace, she put together a series of date-range-specific segments and segmented calculated metrics to build a really cool way of setting targets for KPIs based on your past performance plus a growth percentage. Of course, if Adobe decides to build out the “Forecasting the Future” feature, we won’t need this roundabout method, but who knows when that will be. Until then, we have this as an option.
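
The arithmetic behind a target of “past performance plus a growth percentage” is simple enough to show directly. Halee builds hers with segments and calculated metrics inside Analysis Workspace, which this doesn’t attempt to reproduce; the Python below only illustrates the calculation, and the function names, sign-up counts, and 10% growth rate are hypothetical.

```python
def growth_target(baseline, growth_pct):
    """Target = baseline performance * (1 + growth percentage / 100)."""
    return baseline * (1 + growth_pct / 100)


def progress_to_target(current, baseline, growth_pct):
    """Share of the target reached so far (1.0 means on target)."""
    return current / growth_target(baseline, growth_pct)


# Hypothetical: 12,000 sign-ups in the same month last year, a 10% growth goal,
# and 6,600 sign-ups so far this month.
target = growth_target(12_000, 10)
print(f"Target: {target:,.0f}; progress: {progress_to_target(6_600, 12_000, 10):.0%}")
```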

As I mentioned, Halee will go more in depth in her own Adobe Summit 2018 blog post so keep your ear to the ground and be sure to read it when it is posted!

ObservePoint/Blast Casino Night

In addition to tremendous amounts of learning, Adobe Summit 2018 provided attendees with a good amount of fun. This year Blast co-sponsored a casino night at The Public House with our partner, ObservePoint. The only thing better than the complimentary food, drinks, and chips to play games with, was the great conversations and chance to meet other people who are as geeked out about analytics as I am.

Honorable Rockstar Mention Awesomeness

Oh yeah, and Beck was pretty awesome, too.