The Best Revenue Significance Calculator for A/B Testing

If you’re conducting A/B tests on your ecommerce website and are not tracking revenue, then you are missing out on a crucial component for successful testing: having the right KPI.

Tracking revenue allows your team to make effective business decisions, because you’re measuring performance in a way that actually impacts the bottom line.

So which revenue metrics should you choose?

Some common revenue metrics don’t tell the whole story, which is why we recommend using revenue per visitor (RPV). RPV measures the average revenue generated per user who visits your site:

RPV = Total Revenue / Total Users

We’re about to explain:

- why revenue per visitor is such a crucial (composite) metric

- how to rewrite the RPV formula in terms of transaction rate and AOV

- the right way to measure its statistical significance

- how to use our free online revenue significance calculator

- how to hack your way around sampled data to get the most accurate results

Why Use Revenue Per Visitor in A/B Testing?

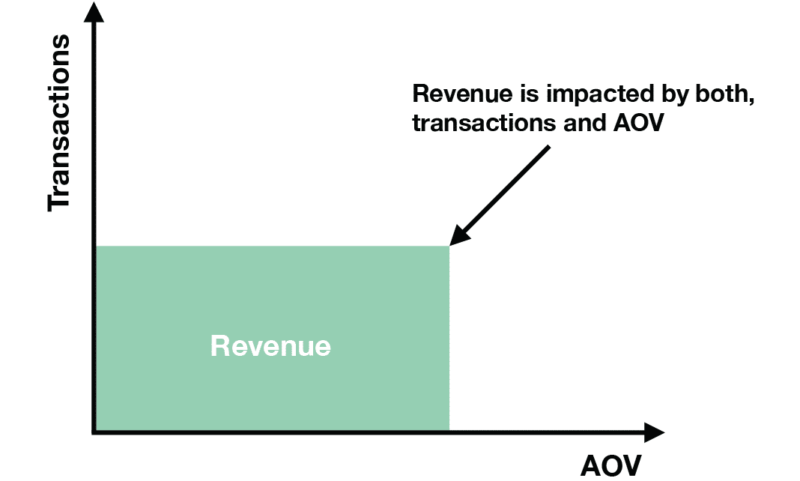

If your team tracks only transaction rate (the percentage of visitors that purchased) or average order value (AOV) as your primary metric for testing, your results are at risk of having blind spots.

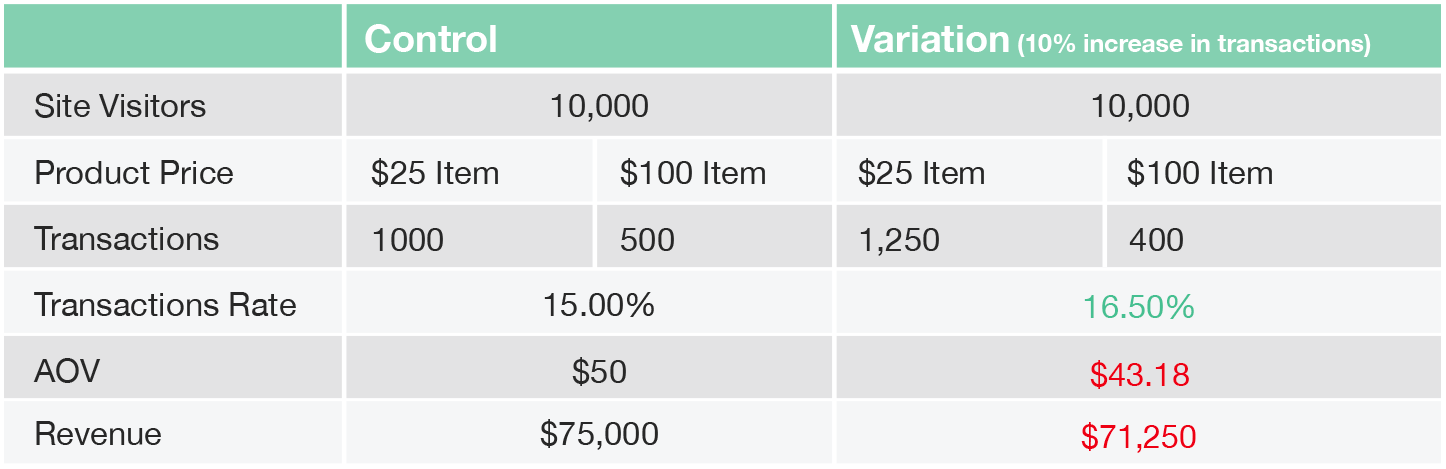

Some people assume that AOV is relatively constant and they only need to focus their efforts on increasing transaction rate in order to increase revenue. However, this logic doesn’t always apply.

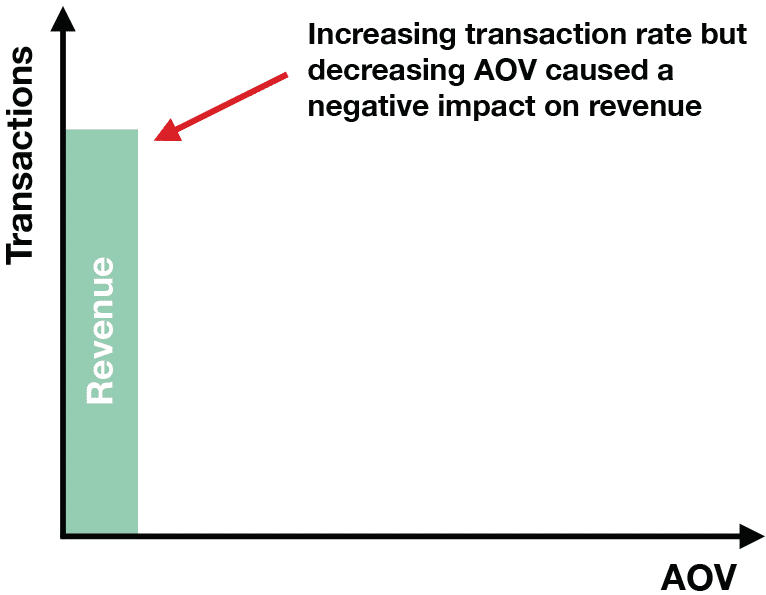

In some circumstances, increasing conversion rate can negatively affect your overall revenue.

For example, if you have a test variation that increases the conversion rate, but users choose to purchase the lower-priced product instead of the more expensive product, this can decrease AOV and overall revenue.

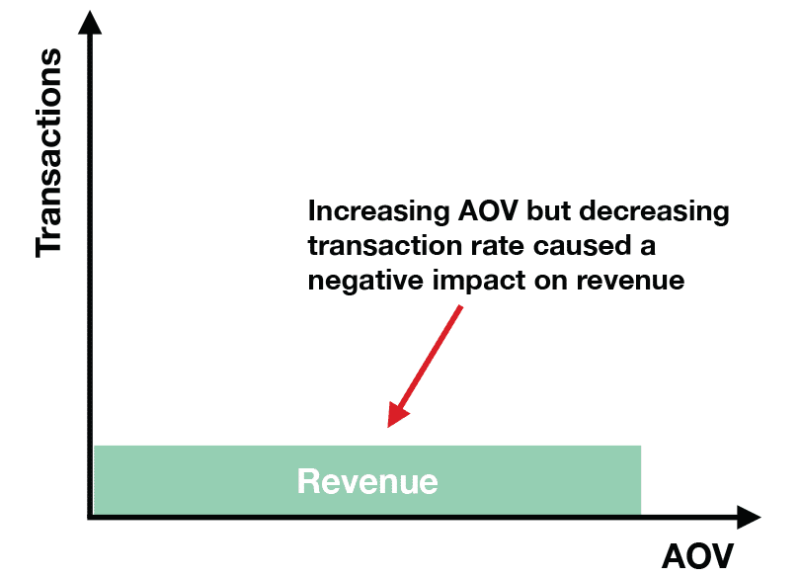

Alternatively, people may focus only on improving AOV to increase revenue, which can lead to a decrease in transaction rate and ultimately hurt revenue.

For example, consider an ecommerce website test where the variation increases the spend threshold to qualify for free shipping. This can lead to a higher AOV, but can also decrease transaction rate because there may be visitors who want free shipping but don’t want to spend the extra money to qualify. As a result, they may choose not to purchase.

The examples above illustrate the need to have a solid conversion strategy for revenue that incorporates both metrics. Revenue per visitor is that composite metric, which accounts for both transaction rate and AOV.

In fact, we can rewrite the RPV formula to include these two elements:

Total Revenue = AOV x Transactions

Transaction Rate = Transactions/Total Users

RPV = Total Revenue / Total Users = (AOV x Transactions) / Total Users = AOV x Transaction Rate

So if your business had 1,000 transactions for every 15,000 users with an AOV of $50, the RPV would be:

Total Revenue = $50 * 1,000 = $50,000

Transaction Rate = 1,000/15,000 ≈ 0.0667

RPV = $50 * 0.0667 ≈ $3.33

Monitoring trends in RPV can help your team analyze sales performance. It’s useful for evaluating your new visitor acquisition and paid user acquisition efforts.

Generally, a positive trend in RPV shows that your company’s sales efforts are working well.

However, if your revenue per visitor is trending downward, this could be the result of an increase in unqualified users to the site or potential site problems (e.g. broken shopping cart), which negatively affects your transaction rate.

Or your visitors may be converting at the same rate but are spending money on lower value items (e.g. higher priced product is out of stock), which negatively impacts your AOV.

Taking the example above, let’s say the number of users increased to 20,000 due to a social campaign that recently launched. Assuming the AOV stayed the same, your team would find that RPV is trending negatively:

Transaction Rate = 1,000/20,000 = 0.05

RPV = $50 * 0.05 = $2.50

Now let’s assume that the traffic stayed the same but your most expensive product was out of stock, causing the AOV to decrease to $37.30:

Transaction Rate = 1,000/15,000 ≈ 0.0667

RPV = $37.30 * 0.0667 ≈ $2.49
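The three scenarios above can be reproduced with a few lines of Python. This is only a sketch of the arithmetic: the function name `rpv_from_components` is ours, and the inputs are the example figures from this post, computed without rounding the transaction rate first.

```python
def rpv_from_components(aov, transactions, users):
    """RPV = AOV x Transaction Rate, where Transaction Rate = transactions / users."""
    transaction_rate = transactions / users
    return aov * transaction_rate


# Baseline: 1,000 transactions from 15,000 users at a $50 AOV.
baseline = rpv_from_components(aov=50.00, transactions=1_000, users=15_000)

# Scenario 1: traffic grows to 20,000 users, AOV and transactions unchanged.
more_traffic = rpv_from_components(aov=50.00, transactions=1_000, users=20_000)

# Scenario 2: traffic unchanged, AOV drops to $37.30.
lower_aov = rpv_from_components(aov=37.30, transactions=1_000, users=15_000)

for label, value in [
    ("Baseline", baseline),
    ("More traffic", more_traffic),
    ("Lower AOV", lower_aov),
]:
    print(f"{label}: ${value:.2f}")
```

Both scenarios land at roughly the same RPV even though the cause is different, which is why it helps to look at transaction rate and AOV alongside RPV.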

RPV does not replace the need to keep an eye on other metrics like AOV and transaction rate. It removes potential blind spots that can occur if you choose to track only those metrics. In essence, it gives your team a better sense of the bigger picture.

How NOT to Calculate Statistical Significance

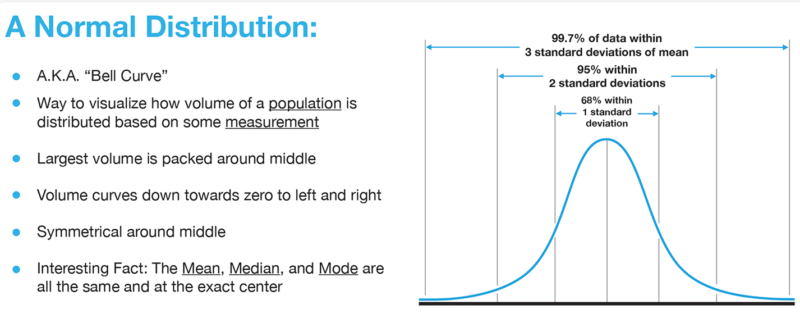

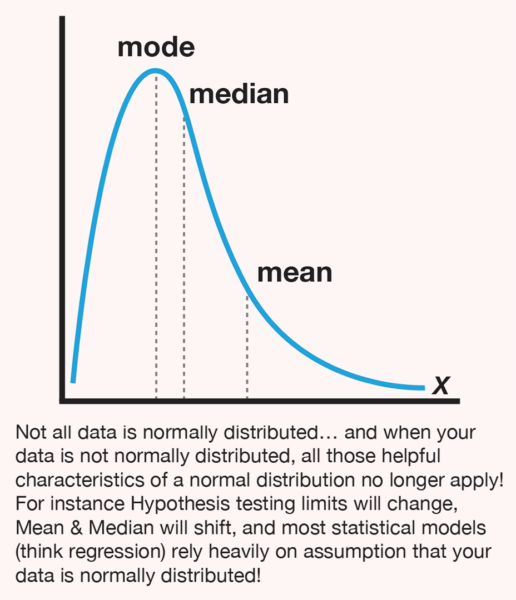

If your team is already using revenue per visitor as the main KPI for your tests, you may have figured out why you shouldn’t use the standard online significance calculators to determine whether your test variation is having an actual impact on RPV. These standard “tools” perform their calculations using a t-test, which rests on one critical assumption: that the metric you’re tracking follows a normal distribution.

Source: Statistics Cheat Sheet

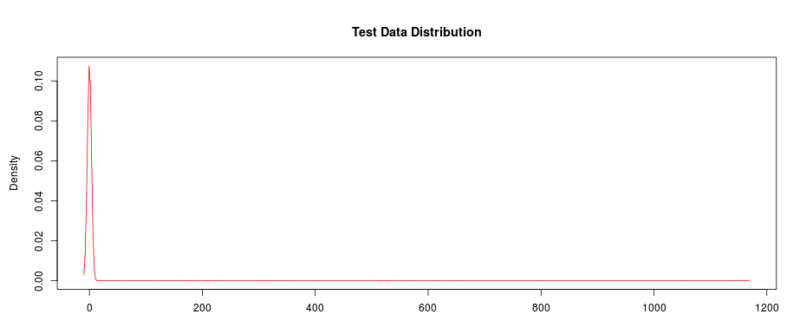

Revenue per visitor violates this assumption. The majority of visitors to your site will not make a purchase, so RPV’s distribution is heavily concentrated at $0. At the same time, there is no limit on how much a visitor can spend, so your RPV data may contain some extreme values.

For these reasons, RPV’s distribution tends to be right-skewed, making the standard t-test less reliable for measuring statistical significance.
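To make the shape of this distribution concrete, here is a small sketch using made-up user-level revenue for 100 visitors (95 non-buyers, 4 typical orders, 1 very large order). The numbers are purely illustrative and are not taken from any real test.

```python
import statistics

# Hypothetical user-level revenue: most visitors spend $0, a few spend a
# typical amount, and one spends far more than everyone else.
revenues = [0.0] * 95 + [40.0] * 4 + [400.0]

mean = statistics.fmean(revenues)        # this is the RPV for the group
median = statistics.median(revenues)
share_zero = revenues.count(0.0) / len(revenues)

# Population skewness: average cubed deviation divided by the cubed
# standard deviation. A positive value indicates a long right tail.
std_dev = statistics.pstdev(revenues)
skewness = sum((x - mean) ** 3 for x in revenues) / len(revenues) / std_dev ** 3

print(f"RPV (mean): ${mean:.2f}")
print(f"Median revenue: ${median:.2f}")
print(f"Share of $0 visitors: {share_zero:.0%}")
print(f"Skewness: {skewness:.2f}")
```

In this toy data set the mean sits well above the median and the skewness is positive, the pattern described above: a spike at $0 plus a long right tail.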

The Right RPV Confidence Calculator for the Job

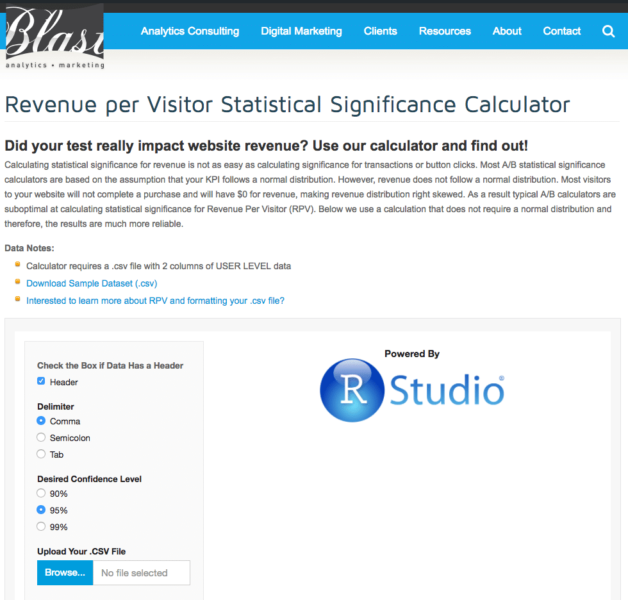

To solve this problem, we launched a free online Revenue Per Visitor confidence calculator designed specifically for calculating RPV’s statistical significance. Our RPV calculator utilizes the Wilcoxon Rank Sum Test, which is not based on the assumption that the data follows a normal distribution.

In fact, the Wilcoxon Rank Sum Test is a non-parametric technique, meaning it does not rely on any specific distributional assumption, and it tests whether there is a difference between the two groups.

This calculation is far more reliable in determining whether there is an actual impact on RPV. It includes a two-tailed calculation, so you can use it to determine whether the variation had a positive impact or a negative impact when compared to the control.
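For readers curious about what a rank-sum test does under the hood, here is a minimal sketch in plain Python. It is not the code behind our calculator: it assumes the large-sample normal approximation with a tie correction and no continuity correction, and the function names and sample revenues are hypothetical.

```python
import math
from collections import Counter


def average_ranks(values):
    """Rank values from 1 to n, giving tied values the average of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1  # positions i..j are 0-based, ranks are 1-based
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def rank_sum_test(control, variation):
    """Two-tailed rank-sum test; returns (z, p_value).

    A negative z means the control's revenues tend to rank lower than the
    variation's; a positive z means they tend to rank higher.
    """
    n1, n2 = len(control), len(variation)
    n = n1 + n2
    combined = list(control) + list(variation)
    ranks = average_ranks(combined)

    rank_sum_control = sum(ranks[:n1])
    u = rank_sum_control - n1 * (n1 + 1) / 2
    mean_u = n1 * n2 / 2

    # Tie correction matters here because most visitors share the value $0.
    tie_term = sum(t ** 3 - t for t in Counter(combined).values())
    var_u = n1 * n2 / 12 * ((n + 1) - tie_term / (n * (n - 1)))
    if var_u <= 0:  # every value is identical, so there is nothing to compare
        return 0.0, 1.0

    z = (u - mean_u) / math.sqrt(var_u)
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-tailed
    return z, p_value


# Hypothetical user-level revenue for each group.
control = [0, 0, 0, 0, 0, 0, 45.0, 60.0]
variation = [0, 0, 0, 0, 0, 52.0, 75.0, 120.0]
z, p = rank_sum_test(control, variation)
print(f"z = {z:.3f}, two-tailed p = {p:.3f}")
```

With samples this small the normal approximation is rough; the sketch is meant to show the ranking logic, not to replace a tested statistics library.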

How to Use the RPV Calculator

If you take a sneak peek at our testing confidence calculator, you’ll notice it looks different from the standard statistical significance calculators.

Standard Online Calculators

Blast’s Revenue Per Visitor (RPV) Calculator

As mentioned above, you cannot simply enter total visitors and total revenue per variation to determine statistical significance.

To accurately measure whether there is an impact on RPV, you need to have user-level data.

Most businesses choose to integrate their A/B tests with their preferred analytics platform and analyze test performance there. This allows teams to make an apples-to-apples comparison when looking at performance across different channels, such as testing and marketing efforts.

The problem is that while you can see overall revenue for test variations within analytics, it is much more difficult to get access to user-level data.

Unsampled Google Analytics Data Hack

The Blast team has a solution for obtaining user-level data so you can make use of the revenue significance calculator.

It may take a little leg work in the beginning, but your team will reap the benefits for the long term. To get user-level data within Google Analytics, follow the steps below and you’ll be on your way to A/B testing success.

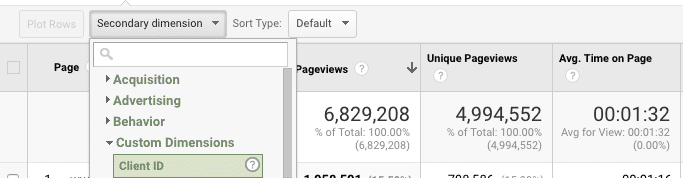

1. Create a Custom Dimension for Client ID

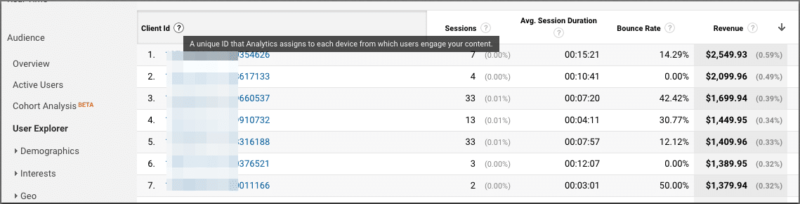

Google Analytics (GA) has recently started offering a new User Explorer report. The best part of this report is that it has a Client ID dimension that tracks user-level behavior, which is specific to browser and device.

Now the downside!

In its current state, you can’t access this dimension outside of this report, so your team can’t pull this data into a custom report.

To get around this problem, your team will need to create a custom dimension for the Client ID. This step should take roughly 1-2 hours for your analytics team to create, QA, and implement. Once this is implemented, you’ll be able to use the Client ID dimension for your test reports as well as other Google Analytics reports.

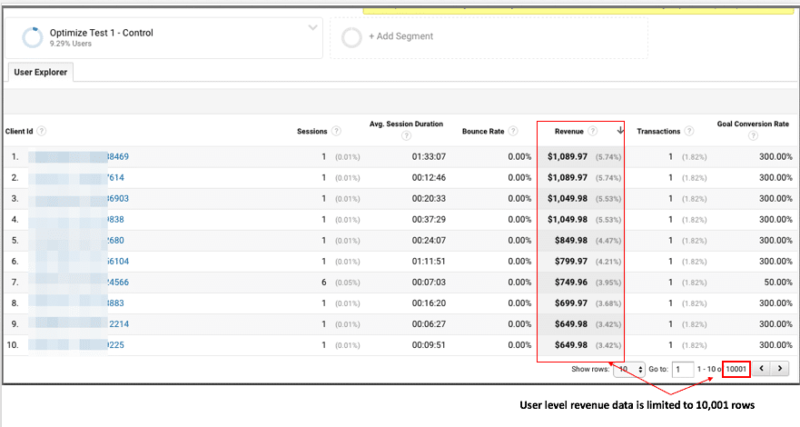

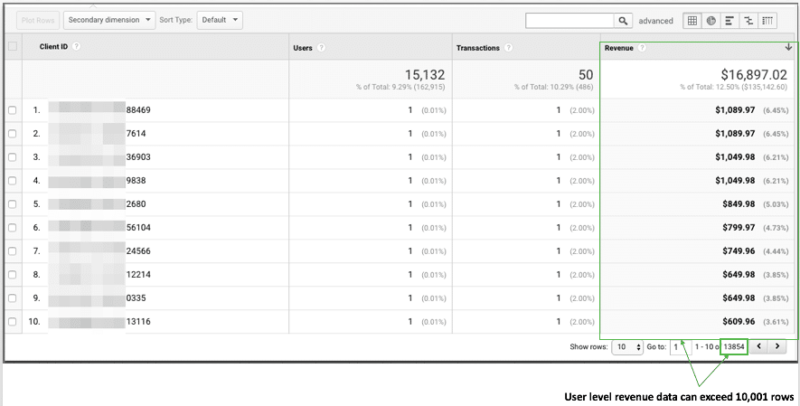

You may think this step isn’t worth the effort and that you can just export the data from the User Explorer report, but that will only work if you have minimal traffic to the site. The User Explorer report caps the data to 10,001 rows.

If your site receives more than 10,000 visitors within the time frame you select, then you won’t be able to see all user-level data and instead will get a sampling of the data. By creating the Client ID custom dimension, you can create a custom report for your test, containing the Client ID, where you’ll be able to capture all the rows of data.

User Explorer Report: Limits Client ID and accompanying revenue data to 10,001 rows.

Custom Report: Provides Client ID (via a custom dimension) and accompanying revenue data beyond 10,001 rows.

2. Utilize unSampler to Export All Data

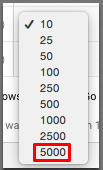

As your team uses the Client ID custom dimension within other Google Analytics reports, there is another challenge that lies ahead. Google Analytics caps the number of rows you can export at one time to 5,000 rows.

If you really have the time, you can attempt to export your report data 5,000 rows at a time, but for most people this is completely inefficient. Previous hacks, like altering the number in the URL to show more rows, no longer work.

If your business has Google Analytics 360, then your team can export all data by using the Unsampled report feature.

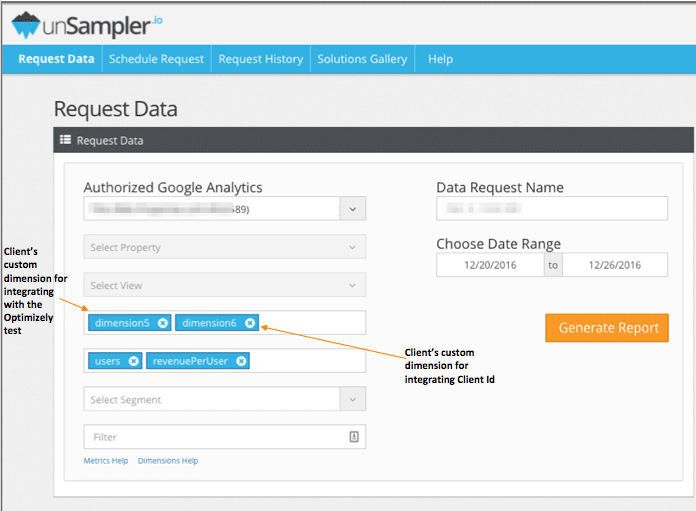

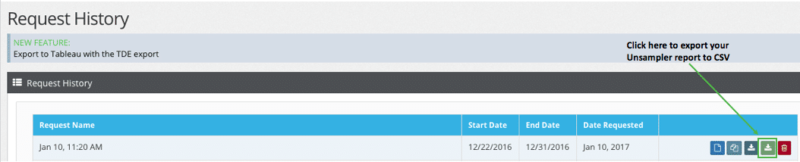

Resolving the sampling issues in the standard version of Google Analytics is as simple as creating an unSampler account and linking your Google Analytics account to it. Doing so will enable your team to easily create a test report (where you will have access to your custom dimensions) and export all of your data to CSV.

3. Format & Upload CSV

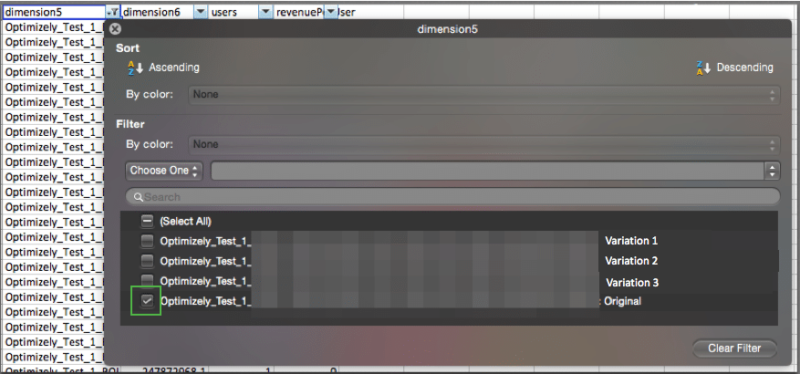

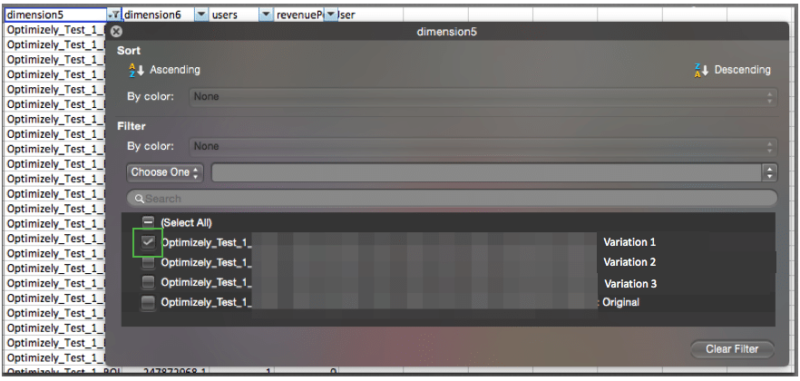

Once you’ve exported data from your unSampler Report, you’ll need to take a few quick steps to format it so it will be ready to use with the revenue significance calculator. First, you’ll need to filter your data for the control:

Then copy the revenue data and paste it in a new tab (optional: you can rename the header to Control Revenue).

Repeat this step with your test variation. After doing so, in the new tab you should have two columns for revenue (Control Revenue and Variation Revenue). Please note, if you have more than one test variation, you’ll need to create separate tabs for each one (e.g. Control vs Variation 1, Control vs Variation 2, Control vs Variation 3).

Save this new tab as a CSV file (or multiple CSV files if you have more than one test variation) and then it’s ready for the RPV Calculator.
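If you would rather script the formatting steps above than do them by hand in a spreadsheet, something like the following sketch could work. The export column names (`Client ID`, `Experiment Variant`, `Revenue`), the variant labels, and the sample rows are all assumptions for illustration; adjust them to match your own export. Leaving blank cells when the two groups have different sizes is also an assumption, so check the resulting file against the calculator before relying on it.

```python
import csv
import io
from itertools import zip_longest


def split_revenue_by_variant(rows, variant_col, revenue_col, control_name, variation_name):
    """Return (control_revenues, variation_revenues) from user-level export rows."""
    control, variation = [], []
    for row in rows:
        # Strip currency formatting such as "$1,250.00" before converting.
        revenue = float(row[revenue_col].replace("$", "").replace(",", ""))
        if row[variant_col] == control_name:
            control.append(revenue)
        elif row[variant_col] == variation_name:
            variation.append(revenue)
    return control, variation


def write_two_column_csv(control, variation, out_file):
    """Write the Control Revenue / Variation Revenue layout described above."""
    writer = csv.writer(out_file)
    writer.writerow(["Control Revenue", "Variation Revenue"])
    # Groups rarely have the same number of users, so pad the shorter column.
    for c, v in zip_longest(control, variation, fillvalue=""):
        writer.writerow([c, v])


# Hypothetical export; in practice, open your exported CSV file instead.
sample_export = io.StringIO(
    "Client ID,Experiment Variant,Revenue\n"
    "1001.1,Control,$0.00\n"
    '1002.2,Variation 1,"$1,250.00"\n'
    "1003.3,Control,$49.99\n"
    "1004.4,Variation 1,$0.00\n"
    "1005.5,Variation 1,$20.00\n"
)

rows = csv.DictReader(sample_export)
control, variation = split_revenue_by_variant(
    rows, "Experiment Variant", "Revenue", "Control", "Variation 1"
)

output = io.StringIO()
write_two_column_csv(control, variation, output)
print(output.getvalue())
```

For a test with several variations, call the two functions once per variation and write each result to its own file, mirroring the separate tabs described above.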

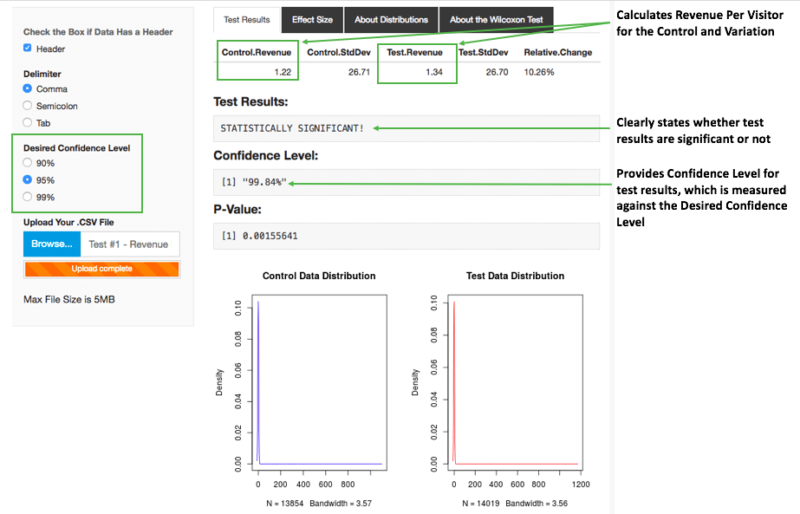

Before uploading your file to the calculator, you can adjust the threshold for determining statistical significance (the default is set at 95%). The last step is simply uploading your file.

The results you get are fast, reliable and easy to understand.

A/B Test Results You Can Trust

While it takes a little bit of effort in the beginning to properly measure revenue per visitor, once it’s set up you can easily analyze this KPI for future tests. Further, by using the free online revenue significance calculator, you can trust that the correct method of analysis was applied.

Your team can rely on test performance results to make those important business decisions.

Please share your comments or let us know if you have questions regarding this process or the calculator.